I don’t know what I’m looking for: Better understanding public usage and behaviours with Tyne & Wear Archives & Museums online collections

John Coburn, Tyne & Wear Archives & Museums, UK

Abstract

Digital collections interfaces have traditionally been designed for audiences with an established interest and motivation to probe the collection for specific objects. There are still relatively few user-centric interfaces that encourage casual browsing and a greater exploration of collections from a broader cross-section of the public. In 2014–2015, supported by the Digital R&D Fund for the Arts, Tyne & Wear Archives & Museums (TWAM) undertook a digital research and development project with Microsoft Research and Newcastle University to explore the following question: “Can cultural organisations with a digital collection improve public access and engagement with their online collection through the adoption of Web interfaces and systems that more creatively provoke and respond to an audience's exploration of content?” A user-centric collections interface was developed to explore this, and a software development kit was produced to allow the wider heritage sector to adopt the system for their own collections. Ongoing user research and evaluation informed every stage of the system’s development and yielded key insights that recommended new directions for TWAM and potentially the wider sector. This paper will explore the results and contextualise them against typical online collection formats and their perceived limitations, as well as other research in the field. It will investigate the public impact of an interface designed to inspire prolonged and open-ended browse, rather than enable directed text search. This paper will argue that there are clear opportunities to deeply engage audiences who have limited knowledge and inclination to search object catalogues, with visual collections interfaces that manufacture a public interest in "discovering the unknown."
As well as recommending design methodologies for developing digital collections, this paper will also ask questions about how this gathered intelligence can positively influence the practice of the museum.

Keywords: collections, search, browse, serendipity, visualisation, user centric

1. Introduction

Over the past two decades, the cultural heritage sector has invested heavily in digitising its physical collections and publishing them online as vast catalogues of information, and increasingly as data to be reused and repurposed. Collections discovery interfaces have traditionally been designed to support audiences with an established interest and motivation to look for objects. There has been significantly less investment in user-centric and curated online collections experiences for audiences less inclined to search.

Nick Poole (2013), chief executive of the Chartered Institute of Library and Information Professionals (CILIP), states that despite the efforts of mass digitisation to make cultural heritage more widely available to the public, the key big data challenge for the sector remains “significantly improving tools and capability for data curation.”

Tyne & Wear Archives & Museums (TWAM) is a major regional UK museum, art gallery, and archives service that manages a collection of nine museums and galleries across Tyneside and the Archives for Tyne and Wear. It holds collections of international importance in archives, art, science and technology, archaeology, military and social history, fashion, and natural sciences. There are over 1.1 million objects in its collections.

Following an application in 2013, TWAM was awarded funding as part of the Digital R&D for the Arts Programme (supported by Nesta, Arts & Humanities Research Council and public funding by the National Lottery through Arts Council England) to research and develop a system that explored this research question: “Can cultural organisations with a digital collection improve public access and engagement with their online collection through the adoption of Web interfaces and systems that more creatively provoke and respond to an audience’s exploration of content?”

The fifteen-month-long project was developed with research partner Newcastle University (NU), technical partner Microsoft Research (MSR), and supporting arts partner Collections Trust (CT). NU and MSR collaborated on both the research and Web development activities. The partners agreed to produce two key outputs:

- A Web interface intended to create a compelling collections experience for non-research audiences

- A software development kit (SDK) to enable cultural organisations to reuse the developed system for their own online collections

It was the aim of the project to focus on two audience types:

- Online audiences that browse rich content on cultural websites and social media but have limited motivation for searching object catalogues

- Cultural heritage professionals who desire open-ended browse functionalities to discover new objects for use in museum activities

TWAM undertook the project to expand audience reach for its online collection and to better understand audience motivations for search. NU and MSR were also particularly interested in the development of exploratory browse interfaces that “shift the focus of research towards understanding the behaviours and preferences of users engaged in exploratory searching, and on measures of exploration success” (White et al., 2006). Online search was a key research theme for MSR, and their team had already developed prototypes to better understand and design for new search behaviours (http://research.microsoft.com/en-us/projects/web).

Over five hundred thousand records were already searchable through TWAM’s catalogue search interface (http://collectionssearchtwmuseums.org.uk). TWAM also regularly publishes smaller, curated datasets on third-party platforms including Flickr Commons (http://www.flickr.com/commons).

2. Research and early development

As part of the early research activity, the team familiarised itself with museum interfaces that were similarly focused on producing new models of collections discovery. Advice was received from museum professionals and researchers including the academic researcher and artist Mitchell Whitelaw, as well as Seb Chan (chief experience officer at the Australian Centre for the Moving Image). Two of the key insights from these conversations were:

- A serendipitous interface should not simply present random objects. Online audiences rarely engage with pure randomness. A novel presentation of objects still requires a conceptual scaffold.

- A novel interface designed to be more inclusive will alienate some audiences. Research audiences can react strongly against browse functionalities. The team needed to accept that what it produced would be inappropriate for some audiences accustomed to the concept of catalogue search.

Mitchell Whitelaw (2015) makes the distinction between traditional collections systems and generous interfaces for digital collections: “Search is ungenerous: it withholds information, and demands a query. (I argue) for a more generous alternative: rich, browsable interfaces that reveal the scale and complexity of digital heritage collections.”

An increasing number of generous collections interfaces are being developed, most notably New York Public Library’s visualisation of 187,000 public-domain artefacts, launched in January 2016 (http://publicdomain.nypl.org/pd-vizualisation).

Fox et al. (2006) state that creating user pathways is an important way of supporting explorations of complex information. The team resolved to trial the development of simple and generous pathways to TWAM’s vast, multifarious collections.

Research methodology

The process for the development of the interface was design-led research. This agile process rapidly created prototypes in the earliest design stages and used them as provocations put to stakeholders and potential users about how the final system should work. As the development progressed, stakeholders and potential users were consulted through a range of activities. In the early stages, this included:

- Design critique sessions in which designers proposed a concept and experts and stakeholders critiqued the ideas

- Guided walkthroughs in which designers and developers demonstrated and explained a prototype system to researchers and stakeholders

Later stages included cooperative evaluation sessions in which users interacted with the prototypes supported by designers and developers, and finally a summative evaluation in which end users interacted with the final version of the interface without the support of designers or developers.

The iterative cycle was driven by the learning gathered from each user evaluation activity. As a result, the design moved incrementally closer to a viable product that met the needs of stakeholders and could be used without developer support.

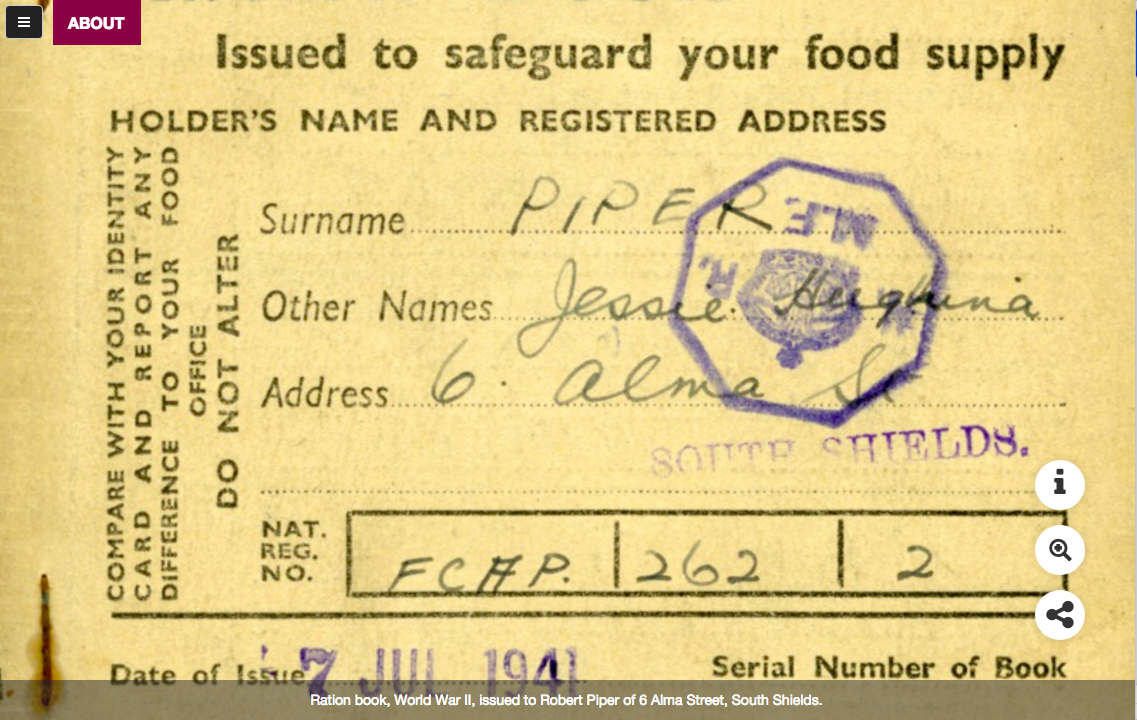

Not all objects are equal

TWAM has digitised over five hundred thousand objects from its collections. The object records naturally vary in the richness of their metadata. While it remains a longer-term ambition for TWAM, it was not within the scope of this project to improve data quality through crowd-sourced interaction.

Rather than produce an interface to accommodate all data, regardless of its perceived quality, the team resolved to work only with the data that enabled them to investigate the primary research question. A minimum requirement for records was that they contain higher-resolution images (a small image of at least 400 pixels in height or width, and a large image of at least 800 pixels in height or width). This parameter filtered the data down to 32,592 records.
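The filtering rule described above can be sketched as follows. This is a minimal illustration rather than the project's code: the `ImageRecord` type and its field names are hypothetical, and applying each threshold to the longer side of the image is one possible reading of "height or width".

```python
from dataclasses import dataclass
from typing import Iterable, List

# Thresholds taken from the text; applying them to the longer side is an assumption.
MIN_SMALL_PX = 400
MIN_LARGE_PX = 800


@dataclass(frozen=True)
class ImageRecord:
    """Hypothetical record shape: pixel sizes of the small and large images."""
    object_id: str
    small_width: int
    small_height: int
    large_width: int
    large_height: int


def meets_image_requirement(record: ImageRecord) -> bool:
    """Return True when both images reach the minimum size on their longer side."""
    small_ok = max(record.small_width, record.small_height) >= MIN_SMALL_PX
    large_ok = max(record.large_width, record.large_height) >= MIN_LARGE_PX
    return small_ok and large_ok


def filter_records(records: Iterable[ImageRecord]) -> List[ImageRecord]:
    """Keep only records whose images are large enough for a visual interface."""
    return [record for record in records if meets_image_requirement(record)]
```

Filtering on image size alone keeps the rule easy to explain to collections staff, at the cost of ignoring other aspects of record quality such as caption length or keyword coverage.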

Focusing on visual richness was in part informed by successful collections interfaces discovered in the research phase. For example, the Rijksmuseum’s acclaimed online collection (http://rijksmuseum.nl/en/explore-the-collection) is already well known and adopts the principle of image first, information secondary, where “the power of the image comes first” (Gorgels, 2015). On this site, high-quality images are generously presented full screen.

Design concepts and principles

Bryant et al. (2012) emphasise that people’s motivation to interact with the Web in an exploratory or playful way increases when they are given more than just the relevant search results, which is in essence the effect of serendipity. However, a key design challenge identified within the team was how a “generous” interface designed for serendipitous collections discovery could still feel coherent to users. An open-ended exploration of vast amounts of data should still make conceptual sense to users. As a result, it was agreed the first iteration of the prototype needed to be:

- Simple

- Visual

- Intuitive

- Immersive

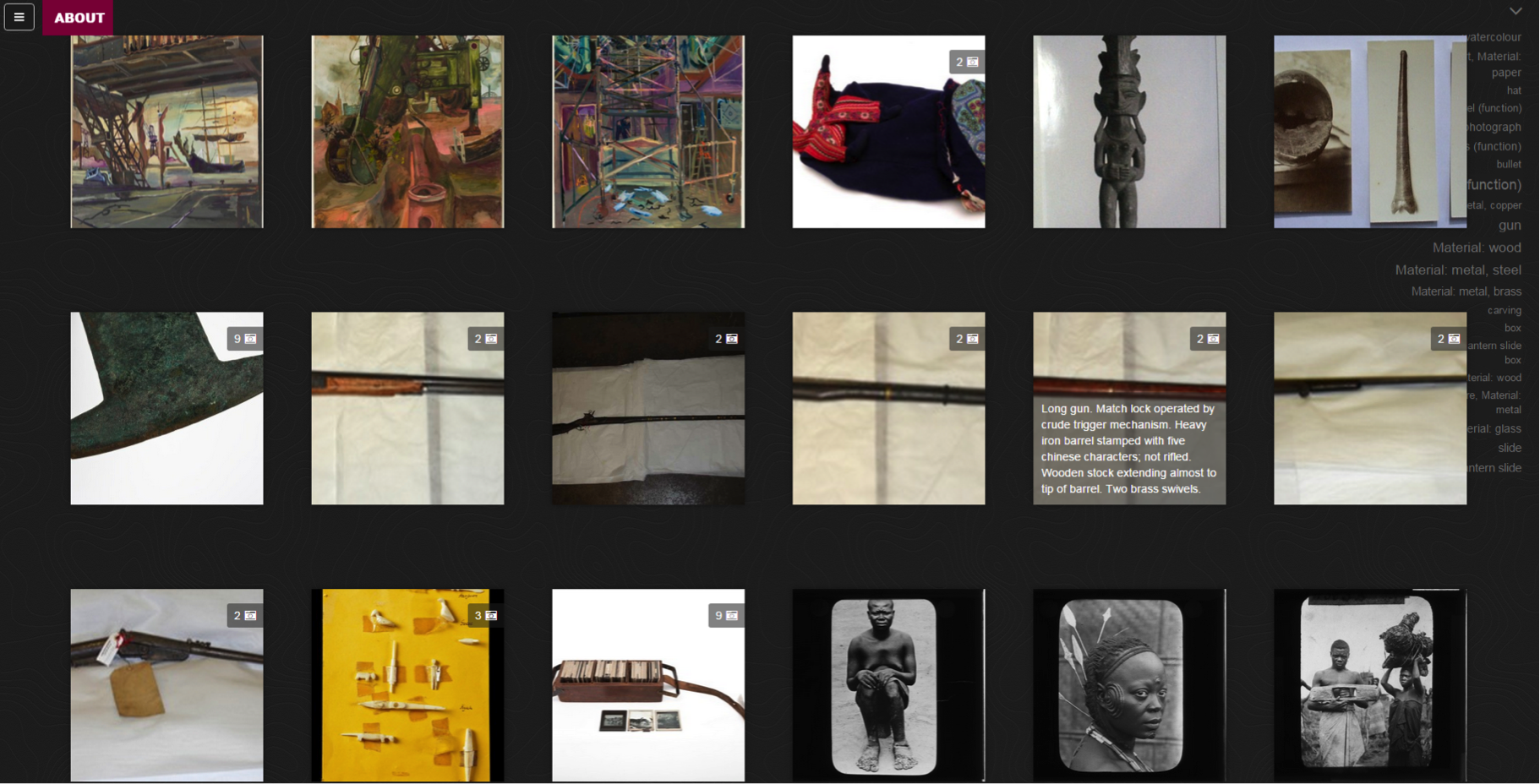

Conceptually, the experience was to enable audiences to lose themselves in the richness of the collections—to easily navigate visually rich and provocative information without having an initial search destination.

Stripping back search

To embody these principles, the core experience of the prototype was centred on a single mode of interaction, commonly used by online social platforms including Pinterest: infinite scroll.

Artefacts were immediately and visually presented on screen. Infinite scrolling enabled users to glide simply through the object data. Details of artefacts could also be zoomed in on. Free text search and multitudes of choice (drop-down lists, headers or menus) were omitted. The experience of journeying through the data was designed to engender curiosity—to provoke interest in what else could exist within the collections. This was not for users who knew precisely what they wanted to find. Further, it sought to gently introduce the idea of the scale and multidisciplinary complexity of TWAM’s data.

It was important that users understood the subsequent effect of their browsing actions. These were not randomly presented objects but an intelligent presentation of objects based on scroll speed. The interface interpreted and responded to three typical user behaviours:

- Engaged. Users scrolling slowly and frequently clicking objects are engaged. Related artefacts would thus be presented. Relatedness of objects was determined by collections types (social history, botany, earth sciences, watercolours).

- Moderately engaged. Moderate scroll speed and some clicking would yield loosely related results.

- Not engaged. Fast scroll indicated a lack of interest with what was on view. Objects would be randomised until scrolling slowed down and a starting point was identified by the user.
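The three behaviours above can be expressed as a small classification step. The sketch below is illustrative only: the threshold values, the function names, and the use of clicks per minute as the measure of "frequently clicking" are assumptions, since the paper does not give the parameters used in the prototype.

```python
from enum import Enum


class Engagement(Enum):
    ENGAGED = "engaged"
    MODERATE = "moderately engaged"
    NOT_ENGAGED = "not engaged"


# Hypothetical thresholds; the prototype's real values are not stated in the paper.
SLOW_SCROLL_PX_PER_S = 300.0
FAST_SCROLL_PX_PER_S = 1500.0
FREQUENT_CLICKS_PER_MIN = 2.0


def classify_engagement(scroll_px_per_s: float, clicks_per_min: float) -> Engagement:
    """Map scroll speed and click frequency onto the three behaviours."""
    if scroll_px_per_s >= FAST_SCROLL_PX_PER_S:
        return Engagement.NOT_ENGAGED
    if scroll_px_per_s <= SLOW_SCROLL_PX_PER_S and clicks_per_min >= FREQUENT_CLICKS_PER_MIN:
        return Engagement.ENGAGED
    return Engagement.MODERATE


def selection_strategy(level: Engagement) -> str:
    """Describe which objects to present next for each behaviour."""
    strategies = {
        Engagement.ENGAGED: "related objects from the same collection type",
        Engagement.MODERATE: "loosely related objects",
        Engagement.NOT_ENGAGED: "randomised objects",
    }
    return strategies[level]
```

For example, `selection_strategy(classify_engagement(2000.0, 0.0))` yields the randomised strategy, matching the "not engaged" case in which fast scrolling signals a lack of interest.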

The first iteration of the feature to convey this action and effect took various forms, making use of colour, shape and movement.

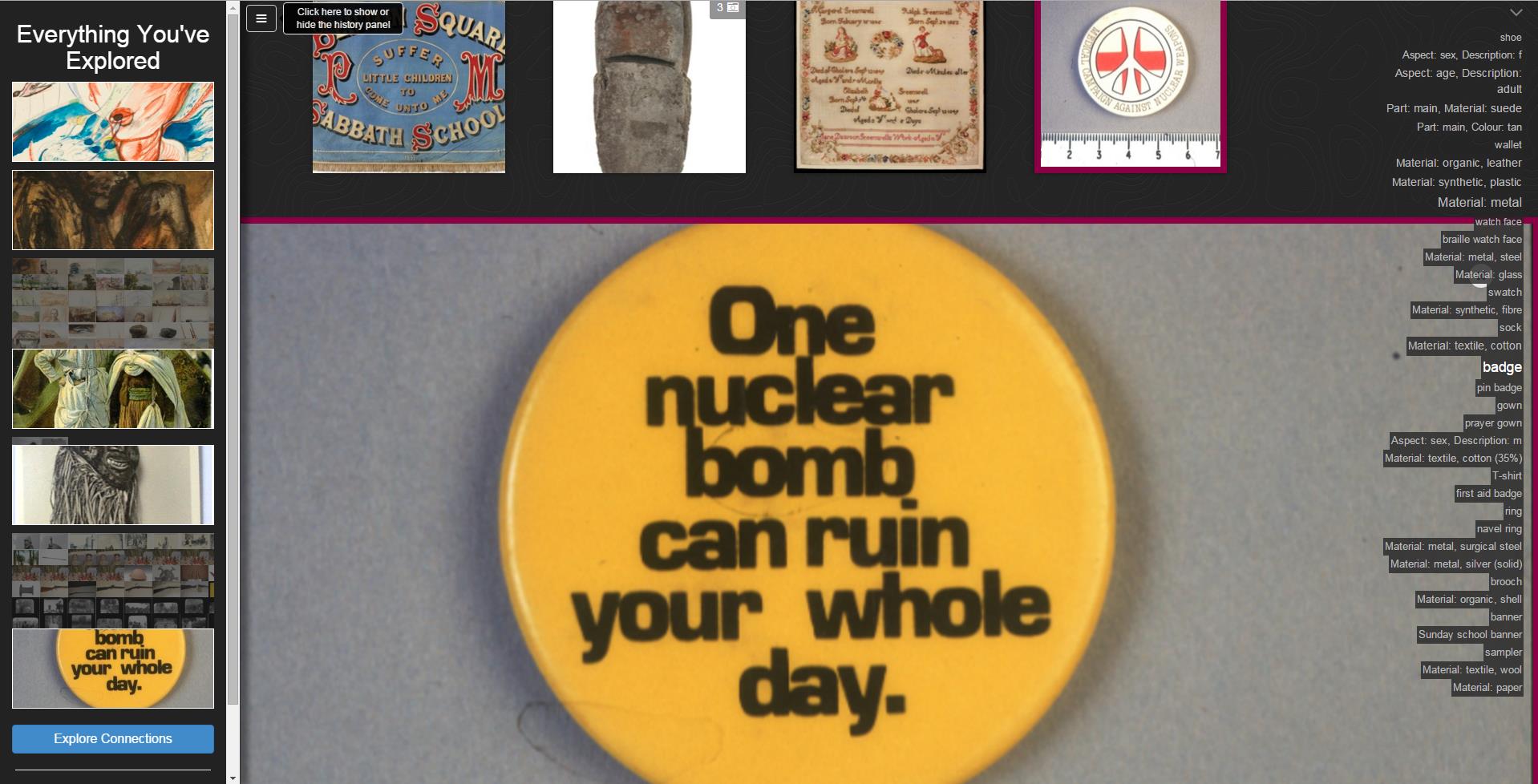

Autocuration

A secondary feature developed was a history side bar (described in the interface as “Everything You’ve Explored”). This listed every object the user clicked and spatially represented everything they skipped past, rendering an ongoing autocuration of their browse experience.

Technical overview

The prototype was built with Web-oriented languages (PHP, JavaScript) using open-source libraries (jQuery, D3), which acted as clients to the RESTful interface that was developed. The System API provided two functions: presentation of the records from recommendation results and recording the navigation path taken by the user. Driving this API was a series of databases.

A software development kit (SDK) was produced that was compatible with the common data format (LIDO XML) aggregated by Culture Grid (http://www.culturegrid.org.uk) and Europeana (http://www.europeana.eu). The codebase is open source and reusable.

3. Usability testing and iteration

The prototype was exposed to two rounds of formative user experience (UX) evaluation. Formative evaluation elicits feedback from users in a format that can inform a design iteration of the prototype.

Study 1

Four women and two men (from twenty-four to forty-one years old) participated in the first round of UX evaluation (Study 1). All participants were cultural heritage professionals who expressed varying levels of prior experience and satisfaction in using online collections interfaces. Participants were asked to interact with the interface collaboratively, thinking aloud and sharing their impressions and expectations. As suggested by Monk et al. (1993) who provided a framework for collaborative evaluation, and Fisher et al. (2012) who evaluated an interface for exploration of large datasets, the thinking aloud technique was chosen due to its potential to gain deep insights into the users’ experiences. To this end, appropriate questions like “What do you think will happen if you click on this button?” or “What has the system done now?” were asked whenever possible to ensure that there was a relatively continuous dialogue (Monk et al., 1993).

After the interaction, participants shared their thoughts about the interface. Overall, there was a positive response to its design, although some aspects of it, including the effect of scroll speed on randomisation, were not felt to be intuitive. Once this had been explained and understood, participants enjoyed using this feature to browse for new objects.

Study 2

Changes were made to the prototype following Study 1’s findings, in particular on how scroll speed’s effect on relatedness of objects could be represented.

Study 2 invited seven women and five men (from twenty to forty-five years old) to individually test drive prototype version 2 for sixty minutes each. Collectively, they had significantly less experience of using online collections than the participants in Study 1. Some were broadly interested in museum collections and artefacts but had limited interest or ability in using text-search catalogues.

Following the examples of Dörk et al. (2012) and Zhang and Marchionini (2005), a mixed-method approach was chosen to collect both qualitative and quantitative data. Sixty-minute evaluation sessions were conducted, each divided into three parts: a 10-minute introduction, a 20-minute test drive, and a 30-minute feedback questionnaire.

The findings of Studies 1 and 2 together showed that test users reacted positively to the prototype at both stages of development and felt it was innovative, clear, and seamless. Study 1 showed that test users enjoyed the autocuration feature and were positive towards the more aesthetic presentation of objects.

The results of Study 2 showed that although some of the user complaints identified in Study 1 had been resolved, new problems had emerged from the addition of new functionality and features. For example, users wanted to explore the relationships between objects. They also wanted additional explanation of the system’s core scroll functionality.

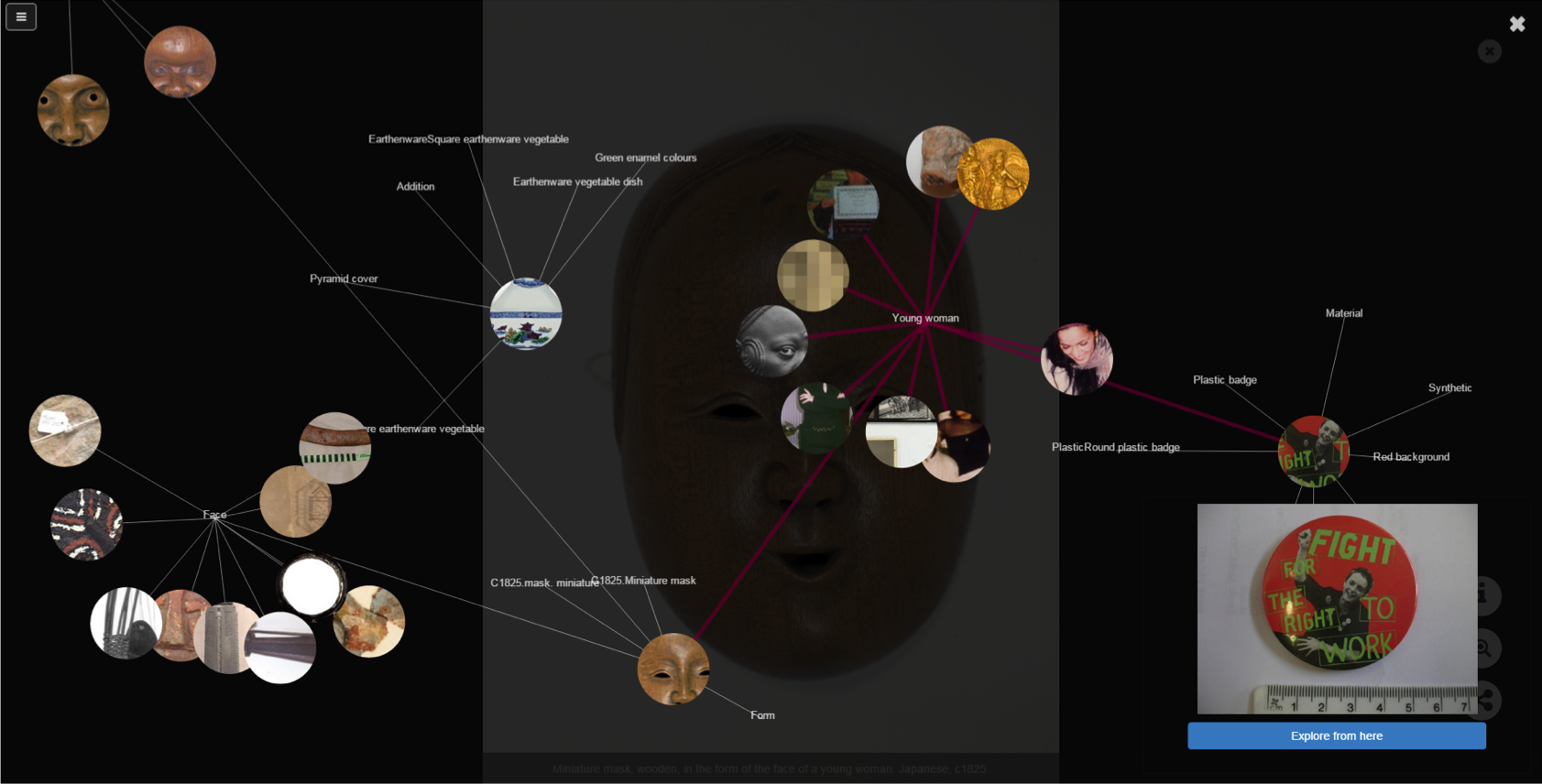

Design changes were incorporated into the final development of the system to address this. A map view of objects (“Explore Connections”) was produced that visualised semantic relationships (e.g., keywords) between diverse artefacts. For example, “Young woman” renders results from collections including Art, Social History, and Egyptology that would not ordinarily be curated together physically or digitally. Dörk, Carpendale, and Williamson (2011) used the metaphor of the “information flâneur” to describe this mode of meandering, serendipitous engagement with complex data rendered as visualisations that reveal its unexpected connectedness.

Figure 5: prototype of “Explore Connections,” a map visualisation of objects and semantic relationships including those centred around the phrase “Young woman”
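One simple way to surface connections of this kind is an inverted index from keywords to objects, which then reveals keywords shared across collections. The sketch below is a hedged illustration under that assumption; the function names and the `(object_id, collection, keywords)` tuple layout are hypothetical, and the paper does not describe how the prototype computed its semantic relationships.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple

# Hypothetical input shape: (object_id, collection, keywords).
ObjectRow = Tuple[str, str, Iterable[str]]


def build_keyword_index(objects: Iterable[ObjectRow]) -> Dict[str, List[Tuple[str, str]]]:
    """Map each normalised keyword to the (object_id, collection) pairs that carry it."""
    index: Dict[str, List[Tuple[str, str]]] = defaultdict(list)
    for object_id, collection, keywords in objects:
        # Normalise case and whitespace so "Young woman" and "young woman" match.
        for keyword in {k.strip().lower() for k in keywords}:
            index[keyword].append((object_id, collection))
    return dict(index)


def cross_collection_keywords(index: Dict[str, List[Tuple[str, str]]]) -> Dict[str, Set[str]]:
    """Return the keywords that link objects from more than one collection."""
    links: Dict[str, Set[str]] = {}
    for keyword, entries in index.items():
        collections = {collection for _, collection in entries}
        if len(collections) > 1:
            links[keyword] = collections
    return links
```

With three objects tagged "Young woman" in Art, Social History, and Egyptology, `cross_collection_keywords` would report that single keyword as a bridge between all three collections, which is the kind of connection the map view was designed to expose.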

Supplementary features were also developed, including a sidebar of object keywords automatically generated alongside visual object scroll to provide textual context for their display.

4. Launch

The developed interface was intended to be an alternative mode of online collections discovery. It served as a supplement to the existing search catalogue and was not a replacement. A conceptual distinction was created between both to prompt users into adopting one broad mode of discovery:

I know what I’m looking for- Let me search (linked to standard collections search catalogue)

I don’t know what I’m looking for- Let me dive in (linked to R&D System titled Collections Dive)

The interface went live for testing on http://collectionsdivetwmuseums.org.uk from May 2015, and following final bug fixes it was marketed to the public from July 8, 2015. It was accessible through the main http://www.twmuseums.org.uk site, as well as its nine individual museums and galleries’ sites.

Two parallel online surveys were available to users on the site for one month from July 2015. A three-question pop-up survey appeared automatically after forty-five seconds had elapsed in a session. A more detailed eight-question survey was accessible to users through the site’s About page.

Summative evaluation was conducted using logged data and Google Analytics, with a qualitative and quantitative analysis of user data to gain insights into behaviours.

Additionally, museum professionals were invited to offer critical feedback on the interface from a UX perspective and also in terms of their museum’s potential interest in adopting it.

A software development kit (SDK) was published in August 2015. The SDK comprised both documentation and a codebase, accessible via GitHub (https://github.com/digitalinteraction/past-paths).

5. Results

The results presented are based on site usage data gathered from its launch on July 6, 2015, to January 6, 2016. Surveys were collected for only one month, during July 2015.

The data was cleaned to focus on behaviours exemplified in meaningful user sessions. Sessions that lasted less than six seconds or more than one hour or contained no interaction from the user at all (e.g., scroll, share, zoom) were excluded from further analysis. The durations of these remaining sessions were calculated by measuring the time from the start of the session to the user’s last action. These exclusion criteria resulted in a reduced set of 9,125 meaningful sessions and 7,444 unique users.
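The cleaning rule described above can be sketched as below. This is a minimal illustration, not the team's analysis code: the `Session` type and its fields are hypothetical, and it assumes the same duration definition (session start to last user action) is used both for filtering and for reporting.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional

MIN_SECONDS = 6.0      # sessions shorter than six seconds are excluded
MAX_SECONDS = 3600.0   # sessions longer than one hour are excluded


@dataclass
class Session:
    """Hypothetical log shape: start time and timestamps of user actions, in seconds."""
    session_id: str
    start: float
    action_times: List[float]


def duration_seconds(session: Session) -> Optional[float]:
    """Time from session start to the user's last action, or None if there were no actions."""
    if not session.action_times:
        return None
    return max(session.action_times) - session.start


def is_meaningful(session: Session) -> bool:
    """Apply the exclusion criteria: no interaction, under six seconds, or over one hour."""
    duration = duration_seconds(session)
    return duration is not None and MIN_SECONDS <= duration <= MAX_SECONDS


def meaningful_sessions(sessions: Iterable[Session]) -> List[Session]:
    """Return only the sessions kept for further analysis."""
    return [s for s in sessions if is_meaningful(s)]
```

Measuring to the last recorded action, rather than to the end of the browser session, avoids counting idle time in an open tab as engagement.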

The mean duration of the 9,125 sessions was 3.17 minutes, with a median of 0.7 minutes. A more detailed analysis revealed that 86 percent of sessions lasted between six seconds and five minutes (70 percent of all sessions lasted less than one minute), 11 percent lasted between five and twenty minutes, and 3 percent lasted between twenty minutes and one hour.
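A summary of this kind can be computed from the cleaned session durations as sketched below. The function name and output keys are hypothetical, and treating each band as inclusive of its lower bound and exclusive of its upper bound is an assumption, since the paper does not state how boundary values were handled.

```python
from statistics import mean, median
from typing import Dict, Sequence


def summarise_durations(durations_s: Sequence[float]) -> Dict[str, float]:
    """Report the mean, median, and share of sessions per duration band (minutes)."""
    if not durations_s:
        raise ValueError("at least one session duration is required")
    minutes = [d / 60.0 for d in durations_s]
    total = len(minutes)

    def share(lower: float, upper: float) -> float:
        # Percentage of sessions with lower <= duration < upper.
        return 100.0 * sum(lower <= m < upper for m in minutes) / total

    return {
        "mean_min": mean(minutes),
        "median_min": median(minutes),
        "pct_under_1_min": share(0.0, 1.0),
        "pct_under_5_min": share(0.0, 5.0),
        "pct_5_to_20_min": share(5.0, 20.0),
        "pct_20_min_plus": share(20.0, float("inf")),
    }
```

Reporting the median alongside the mean matters here: a mean well above the median, as in the figures above, indicates a long tail of a few lengthy sessions pulling the average upwards.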

It is problematic to compare this data with the equivalent data on the TWAM object search catalogue (http://collectionssearchtwmuseums.org.uk). However, on this object catalogue over the same time period there were:

- 3,386 users

- 4,564 meaningful sessions, compared to the 9,125 meaningful sessions on Collections Dive

- Average of 4.28 objects viewed

- Average dwell time of 4.4 minutes

The number of users and sessions was higher on the Collections Dive interface, and the object catalogue experienced a higher dwell time, but it is difficult to draw meaning from this. Given there were two choices presented to users on the initial collections search landing page (I know what I’m looking for- let me search and I don’t know what I’m looking for- let me dive in) it would arguably be artificial to compare the behaviours of audiences who express fundamental differences in their search preferences. Two assumptions might be that an object catalogue user is more committed to dedicating time to searching, and that a Collections Dive user is curious but has no particular search request in mind, and is thus more likely to abandon the experience quickly if it does not interest them.

Another potential reason why fewer users engaged with the search interface is that during this trial it was undergoing redevelopment. While it remained fully functional throughout this six-month period, it was aesthetically stripped back and unlikely to inspire audiences who were not willing to engage with a text search box.

Non-engagement

For 1,931 (21 percent) sessions, there was no significant engagement with the collections. In these sessions, there was no record of the user having scrolled to view items beyond those presented immediately on diving into the collection. Nor was there any record of the user having selected items, zoomed on items, etc.

Deeper engagement

For the remaining 7,194 (79 percent) sessions, the team identified three levels of deeper engagement: Cruising, Digging, and Sharing. These involved deliberate interaction between the user and the system.

An analysis of usage data enabled the team to broadly interpret typical behaviour types:

Cruising the collections

Of the 9,125 sessions, 2,773 (30 percent) involved the user scrolling through collections but not engaging with any of the special features (including map view, history bar, and social sharing). These sessions involved between 1 and 224 scroll events. Sessions lasted a mean time of 3.3 minutes and a median of 1.0 minutes.

Digging into collections

Of the 9,125 sessions, 2,953 (32 percent) involved not only scrolling but also some form of deeper engagement with the collection. This involved a mean of 8.4 additional deeper actions per session with a median of 4 per session. Sessions lasted a mean of 4.8 minutes with a median of 1.8 minutes. This included a range of additional actions such as moving to map view. Below is a summary of the three most relevant actions:

- Selecting. On 2,506 (27 percent) sessions, the user clicked on at least one item in the collection. An average of 2.9 items were selected during these sessions.

- Zooming. On 2,341 (26 percent) sessions, the user zoomed in on one or more items. The average number of zoom events was 2.7 per session.

- Getting Information. Once an item has been selected, an option for reading text information about that object becomes available. Users requested information in this way on a total of 1,244 (14 percent) sessions. The average frequency of request was 1.6 per session.

Sharing collections

Of the 9,125 sessions, only 209 (2 percent) sessions included at least one item or a set of items from the history sidebar being shared (via social media or copied links).

Of these 209 sessions, 106 involved the user sharing their history sidebar.
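The behaviour types reported above could be derived from logged events along the following lines. This sketch is an assumption-laden illustration: the event names are hypothetical, and it assigns a single label per session by the deepest behaviour observed, whereas the paper does not state whether its reported categories were mutually exclusive.

```python
from typing import Iterable

# Hypothetical event names for the "deeper" actions described in the text.
DEEPER_ACTIONS = frozenset({"select", "zoom", "info", "map_view"})


def classify_session(events: Iterable[str]) -> str:
    """Label a session by the deepest behaviour observed in its event log."""
    seen = set(events)
    if "share" in seen:
        return "sharing"
    if seen & DEEPER_ACTIONS:
        return "digging"
    if "scroll" in seen:
        return "cruising"
    return "non-engaged"
```

For instance, a log of `["scroll", "scroll", "zoom"]` would be labelled as digging, while a log containing only scroll events would be labelled as cruising.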

User experience surveys

Ninety-six surveys were completed in total, which was proportionately small compared to the number of users of the site. It is important to contextualise any insights from this feedback against the larger qualitative datasets extracted.

- Three-question pop-up survey

Fifty pop-up surveys were completed during the trial. Of respondents, 72 percent said they were exploring surprising things, 72 percent said they were enjoying the experience, and 60 percent said they would visit the website again.

- Eight-question survey

Forty-six surveys were completed. The average age of respondents was 29.3 years, with ages ranging from 19 to 66.

The survey gathered feedback on the user experience and suggestions for technical improvements. Problems identified by users were primarily around understanding the cause and effect of scroll speed and frustration with the absence of text search. The intention to generate intrigue through a visual and immersive presentation of objects was cited as enjoyable by some but as bewildering or irritating by others: “It’s too immersive, too quickly!” Sixty-seven percent of users said they were surprised by the types of object that they found in the collection. An example survey response indicated:

“I didn’t know that the museum had so many great things—there were some really weird mud masks and (objects) connected (to them).”

Sector response

There has been some early interest from cultural heritage professionals in the SDK and their potential application of the interface, as well as considerable interest in the insights gleaned around user behaviours within this type of interface. A range of comments collected via professional networks including Museums Computer Group expressed enthusiasm for both the project and its future application. In addition, technical fixes and user experience changes were recommended. Comments from sector professionals included:

“Great fun- the sector has needed something like this for a long time.”

“I love the presentation of the artefacts and the exploration of the connections! Totally respects the archival provenance and hierarchical structure.”

“The ‘Explore Connections’ facility was the core facet of the project for me. The ability to show relational connections generated via tags or metadata seems to me to be a really important thing to be trying to do.”

“The site doesn’t explain why some objects are presented together and it’s easy to get lost in a sea of related objects.”

“I still find myself yearning for a search box and typing things that spring into my mind when I see something interesting and wonder what else there might be, especially if the results came back so richly displayed.”

“The biggest challenge, to me, in re-curating collections in a more data-centric [or semantic] way, is helping museum people make the connections revealed by projects like yours in the first place.”

Audience insights

The team was able to glean audience insights from the gathered user data and surveys. It is important to stress, however, that these insights represent only audiences interacting with the team’s embodiment of a collections browse experience. We are unable to make sector-level assumptions about browse versus search, beyond this interface. For example, audiences who were disengaged with the interface are not necessarily audiences who dislike open-ended browse.

Regardless, with the available data there were clear differences among user behaviours, engagements, and expectations of what a collections website is for.

The project demonstrated that the highly visual browse interface was a successful way of increasing audience engagement with TWAM collections. Almost 70 percent of audiences stated this style of presentation was the primary reason they enjoyed the experience. They liked casually encountering thematically varied objects at once and stated that they engaged with objects they would not have searched for otherwise.

A second audience wanted a lot more control over the experience and desired more explicit information explaining why they were seeing what they were seeing. The playful scroll speed functionality was either unclear or deemed inadequate for their search journey. Furthermore, the aesthetic and immersive presentation of the artefacts obstructed their primary quest for object information.

A third audience reacted positively to the novel presentation and discovery functionalities but wanted tighter search controls (e.g., text box) as an eventual part of the experience, once they had stumbled across content that provoked specific search queries.

Each of these three broad audience types included both users with previously high and low levels of experience in using collections interfaces.

Perhaps predictably, the data reinforced the view that collections engagement is all relative. Depth of engagement in the search catalogue interface would ordinarily be interpreted as the extent to which users probed multiple individual objects for detail. Certainly, this form of engagement was visible in the data. However, a large number of users were happy to spend prolonged periods of time browsing hundreds of artefacts collected on screen together, hovering over text captions for context, without ever diving into individual objects for more detail. For this audience, it seemed the collections interface was a holistic experience and an encounter with a rich collage of juxtaposed artefacts. In contrast, for other audiences who simply wanted to find the object, the interface was only a tool to conduct their enquiry.

The dwell time data and a large proportion of the survey responses suggested an infinite scroll presentation was effective in manufacturing intrigue around what else there is whilst easily enabling its retrieval. A number of user comments—“I can’t stop scrolling!” and “I cannot tear myself away from this”—were testament to this.

Perhaps the most revealing insight was that, among visitors accessing the collections search pages on TWAM’s websites, those who identified themselves as not knowing what they were looking for outnumbered those who did by almost two to one. Regardless of whether the interface satisfied their need or not, the click-through data indicates that a substantial online audience exists that desires collections engagement without a specific search request in mind. The responsibility therefore is with the museum to create user-centric experiences that propel casual, curious audiences into and through the collection.

Audiences enjoyed the sense of miscellany with the objects presented. The visual juxtaposition of very different themes and artefacts was cited as compelling by multiple users. There was not one instance of feedback from users wanting only one type of collections content, even from those who arrived at the interface via a collections-specific venue’s website (such as art audiences entering via the Laing Art Gallery website).

Despite over 80 percent of participants in the user evaluation stages stating they desired social sharing functionality, only 2 percent of sessions following launch included at least one item being shared.

Collections insights

Some of the user comments had wider-reaching recommendations about the quality of TWAM’s collections data. Most audiences were satisfied with the data that was presented. However, some reflected on data issues as interface issues. Minimally completed records that were not interesting, plainly descriptive keywords that were not provocative enough (painting, military vs. grief, danger), badly composed and pixelated photographs of objects, and distinct records with duplicate images were all commented on as problems with the interface.

An online collections experience, whether novel or traditional, will ultimately succeed or fail as a direct result of the quality of the data it presents. One recommendation the team have begun to explore internally with TWAM curatorial and collections staff is to emphasise that the digital user experience begins the moment data collection and data entry start. TWAM is currently investigating how insights from the project can positively influence its practice, and the topic has been raised at subsequent curatorial meetings. A bespoke workshop is being developed with museum staff to explore the impact of quality collections data on quality digital user experiences. It will emphasise the public value of fewer, richer records over more numerous but relatively sparse records.

6. Future iterations

The team have identified a number of design changes, technical improvements and potential expansions of the system that would be considered for a next round of development. These include connecting the front-end interface to a live collections data source; exposing objects to organic search to engage broader online audiences via search engines; and significantly improving mobile functionality (although the interface is mobile responsive and was tested on mobile, user feedback identified improvements to be made).

Research has also been proposed into adapting the system to more intelligently curate objects in response to ongoing cumulative user data. In theory, the system could make assumptions about compelling and non-compelling artefacts by responding to the types of objects that typically feature in prolonged user sessions and the objects that typically feature in sessions quickly terminated (e.g., visualisations of social history records are more effective than geology records in provoking user journeys). The data that will allow us to interpret the likely impact of an object or collection does exist and is continually being gathered from user sessions.
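The idea of inferring compelling and non-compelling object types from session data can be illustrated with a minimal sketch. It is not part of the project's system or software development kit. The session format, the 120-second threshold for a "prolonged" session, the function name and the sample data are all assumptions made up for illustration.

```python
from collections import defaultdict

# Hypothetical threshold (in seconds) separating "quickly terminated"
# sessions from "prolonged" ones; the real cut-off would need tuning.
PROLONGED_SECONDS = 120


def engagement_scores(sessions, prolonged_seconds=PROLONGED_SECONDS):
    """Return, per object type, the share of sessions featuring that
    type which counted as prolonged (0.0 = never, 1.0 = always)."""
    seen = defaultdict(int)       # sessions in which the type appeared
    prolonged = defaultdict(int)  # of those, how many were prolonged
    for duration, object_types in sessions:
        for object_type in set(object_types):  # count a type once per session
            seen[object_type] += 1
            if duration >= prolonged_seconds:
                prolonged[object_type] += 1
    return {t: prolonged[t] / seen[t] for t in seen}


# Invented sample data: (session duration in seconds, object types viewed).
sessions = [
    (600, ["social history", "art"]),
    (15, ["geology"]),
    (300, ["social history"]),
    (20, ["geology", "art"]),
]

scores = engagement_scores(sessions)
for object_type, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{object_type}: {score:.2f}")
```

A score of this kind could, in principle, be used to weight which object types are surfaced first. A real implementation would also need to account for small sample sizes and for the order in which objects were encountered within a session.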

While this was an experimental R&D project primarily concerned with better understanding audiences and their relationship with collections discovery, it did result in a functioning collections interface that will continue to be maintained and kept live for the foreseeable future. TWAM has no in-house web team and, consequently, any technical development will require further funding. TWAM and NU are interested in identifying R&D funding to support a next iteration of the system in response to the insights gleaned to date and to ongoing public usage data.

TWAM R&D interface: http://collectionsdive.twmuseums.org.uk

Project page: http://artsdigitalrnd.org.uk/projects/tyne-wear-archives-museums/

Project software development kit: https://github.com/digitalinteraction/past-paths

Acknowledgements

Nesta, AHRC, and Arts Council England for funding and supporting the project.

The project partners and the staff at Tyne & Wear Archives & Museums, Newcastle University, Microsoft Research and Collections Trust.

Respondents from the Museum Computer Group network.

Mitchell Whitelaw and Seb Chan for advice and guidance.

Alexander Wilson at Newcastle University for providing photography of user studies.

References

Bryant, E.M., R. Harper, & P. Gosset. (2012). “Beyond Search: A Technology Probe Investigation.” In D. Lewandowski (ed.). Web Search Engine Research. Emerald Library and Information Science, 227–250.

Coburn, J., P. Wright, & R. Harper. (2015). “Tyne & Wear Archives & Museums: A novel interface to digital collections Research and Development Report.” London: Nesta.

Dörk, M., S. Carpendale, & C. Williamson. (2011). “The information flaneur: A fresh look at information seeking.” In CHI ’11: Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, ACM 2011, 1215–1224.

Dörk, M., C. Williamson, & S. Carpendale. (2012). “Navigating Tomorrow’s Web. From Searching and Browsing to Visual Exploration.” ACM Transactions on the Web 6(3), article 13.

Fisher, D., I. Popov, & M. Drucker. (2012). “Trust me, I’m Partially Right: Incremental Visualization Lets Analysts Explore Large Datasets Faster.” CHI ’12, May 5–10, 2012.

Fox, E.A., F.D. Neves, X. Yu, R. Shen, S. Kim, & W. Fan. (2006). “Exploring the Computing Literature with Visualization and Stepping Stones & Pathways.” Communications of the ACM 49(4).

Gorgels, P. (2014). “Reaching the Modern Visitor.” International Council of Museums News 67, 12–13.

Monk, A., P. Wright, J. Haber, & L. Davenport. (1993). “Improving your human computer interface: a practical technique.” BCS Practitioner Series.

Poole, N. (2013). “Big Cultural Data.” Available http://www.collectionstrust.org.uk/past-posts/big-cultural-data

White, R.B., B. Kules, & S.M. Drucker. (2006). “Supporting Exploratory Search.” Communications of the ACM 49(4): 37–39.

Whitelaw, M. (2015). “Generous Interfaces for Digital Cultural Collections.” Digital Humanities Quarterly 9(1).

Cite as:

Coburn, John. "I don’t know what I’m looking for: Better understanding public usage and behaviours with Tyne & Wear Archives & Museums online collections." MW2016: Museums and the Web 2016. Published January 29, 2016.

https://mw2016.museumsandtheweb.com/paper/i-dont-know-what-im-looking-for-better-understanding-public-usage-and-behaviours-with-tyne-wear-archives-museums-online-collections/